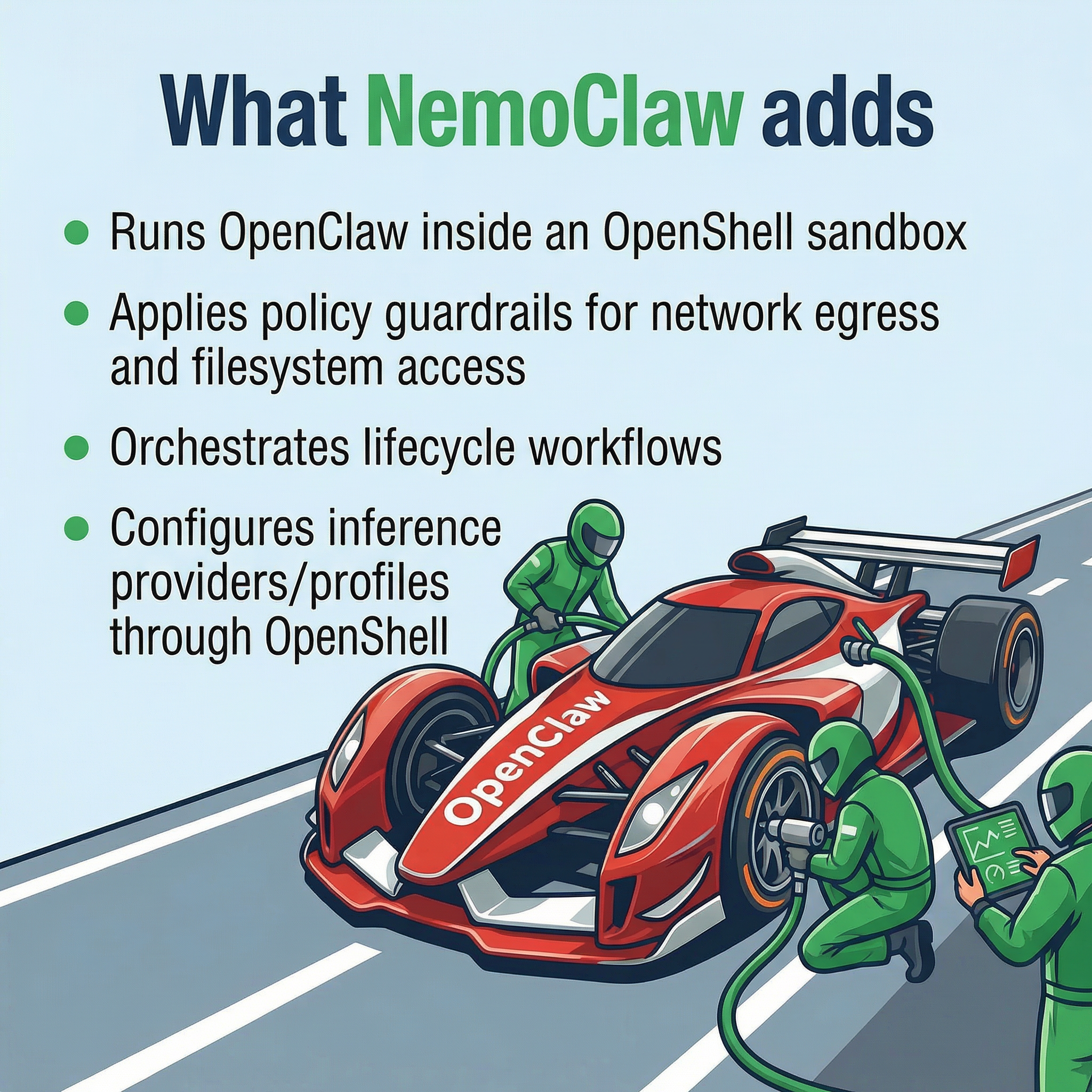

A lot of teams are experimenting with putting agents into production. Once you try to do that inside a real company, you immediately run into security, permissions, monitoring, and governance questions. NemoClaw looks like NVIDIA’s answer to that layer of the problem.

NVIDIA originally built its fortune on CUDA, the interface layer that made it possible for developers to program NVIDIA GPUs. CUDA turned GPUs from specialized hardware into a platform developers could build on.

If the next wave of software is programming AI agents, it makes sense that NVIDIA would want to be that foundational layer again, this time higher up the stack.

Important point: NemoClaw does not replace OpenClaw.

It extends it.

OpenClaw is the racecar.

NemoClaw is the pit crew.

The piece that makes NemoClaw interesting looks to be another new bit of tech, OpenShell.

OpenShell is a sandboxed execution environment for AI agents. It keeps agents isolated in their own containers, limiting access to files, directories, and network/internet endpoints.

It also handles model routing, which helps keep credentials away from local models while setting the stage for safely using remote models like GPT-5 or Gemini.

Interesting times. Is everyone now a manager, or is nobody a manager? One way to find out.